Tales of the Night, summary of a technical supervision

By Narann on Thursday, 21 July 2011, 16:41 - 3D Computer Graphics - Work

This is an English translation of a post I made some time ago.


As you may know, the feature film Tales of the Night (Les Contes de la Nuit in French) by Michel Ocelot (Kirikou, Azur et Asmar and others) was released in August.

I was fortunate enough to supervise the technical side of the production. Beyond the fact that I think this film is a real artistic success, it was definitely one of my best experiences. :sourit:

In this post I propose to go over the means put in place to achieve this beautiful, what am I saying? Wonderful project!

(And to do a little advertising too :siffle: )

Note

Although the content of this blog is licensed under a Creative Commons license, all images in this post are the property of their respective authors. Violators may be prosecuted and could be kidnapped in their sleep, stoned in the public square and deprived of candy. :gniarkgniark:

How I arrived on the project

First, I think it is worth putting things back in context.

It all started at the end of 2009. I was working at Def2Shoot on the animated feature Yona Yona (the project's old name). Following several French-Japanese production meetings, the decision was made to animate the main characters in Maya and the sets in XSI. I found myself the only Maya TD (D2S works in XSI).

Franck Malmin, the D2S CEO (read his really honest post-mortem about the film, in French), suggested that the Yona Yona surfacing TD help "a production" get started. This TD began spending a few afternoons at Nord Ouest Film, where part of "Michel's team" was already in place.

At that point I knew nothing more. But the difficulties Yona Yona would run into were beginning to be felt, and the production asked for its surfacing TD to come back to the project full time.

At Def2Shoot, the Maya side was less of a problem (thanks to the arrival of a second TD), so Franck asked me to continue the work begun at Nord Ouest.

It was at this time that I met the people I came to know as "Michel's team", all great people. :sourit:

At first, my job was not meant to last long and was very basic. I had to understand the project's needs and address them with quick solutions (helping convert textures to mental ray's .map format because some matte paintings were monstrously large, defining a folder hierarchy and explaining the "why" and the "how" of each file, etc.).

Despite the rather limited technical needs of this project (Tales of the Night is not Transformers with raytracing everywhere), the team, which was rather small and made up of a handful of non-technical people, could in the long run end up spending more time dealing with technical problems than actually working in peace. :reflechi:

At that time, the plan was still 10 episodes of 13 minutes each, delivered in full HD.

This concern was shared by the rest of the team and, after a few conversations between Franck and the production, it was decided that I would stay on the project until the end (the project lasted a year and a half and, it is worth noting, the deadlines were met).

I was now officially part of the team!

Drastic measures

This decision changed my involvement in the project quite a bit: I could look "further ahead", do more advanced things, handle more complex cases, etc.

Michel Ocelot's assistant director came from 2D and was used to doing a huge, amazing amount of pre-production work. So the storyboard and the directing were already done, and the technical breakdown was already in the production manager's Excel files before an episode even went into layout.

This reassured me a lot, because having to plan for everything changing at the last moment (an attitude all too common in advertising and in cinema, aka "à l'américaine") would not have let me take risks to improve the production and its conditions. Naturally, when you have some assurance that what is planned is what will actually be done, you spend less time on "makeshift fixes".

That's when I could begin defining the outline of the workflow.

Use scripts

Let's not beat around the bush: scripting went everywhere no one else dared to venture. :sourit:

A technically coherent folder hierarchy was therefore essential to automate tasks, keeping in mind that it would be handled by "humans" and so also had to remain understandable.

The preferred language was MEL for everything inside Maya (I was not yet comfortable with "Python in Maya") and Python for everything related to file manipulation (os and re are your friends).
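To give an idea of how `os` and `re` work together on a naming convention, here is a minimal sketch. The pattern `ep<NN>_sh<NNN>_<layer>.<ext>` and the function names are hypothetical, not the production's actual convention.

```python
import os
import re

# Hypothetical convention (not the production's real one):
# ep<NN>_sh<NNN>_<layer>.<ext>, e.g. "ep03_sh042_bg01.tiff"
NAME_RE = re.compile(r"^ep(?P<ep>\d{2})_sh(?P<shot>\d{3})_(?P<layer>\w+)\.(?P<ext>\w+)$")


def parse_name(path):
    """Return (episode, shot, layer, extension) for a conforming name, else None."""
    match = NAME_RE.match(os.path.basename(path))
    if match is None:
        return None
    return int(match["ep"]), int(match["shot"]), match["layer"], match["ext"]


def files_for_shot(folder, episode, shot):
    """List the conforming files in `folder` that belong to one episode/shot."""
    result = []
    for name in sorted(os.listdir(folder)):
        parsed = parse_name(name)
        if parsed is not None and parsed[:2] == (episode, shot):
            result.append(os.path.join(folder, name))
    return result


print(parse_name("/proj/maps/ep03_sh042_bg01.tiff"))  # (3, 42, 'bg01', 'tiff')
print(parse_name("notes.txt"))  # None
```

Once every file name can be parsed back into episode, shot and layer, most of the later tools reduce to "find the files for this shot", which is why the convention mattered so much.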

Scripts were mainly used at the "crossing points" of information and files. I will refer to them often in the rest of this post, because I spent almost the whole production with my fingers on the keyboard. :pafOrdi:

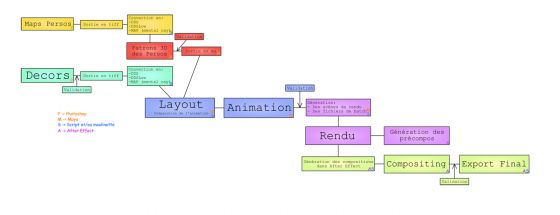

Pipeline summary

Set artists

The set artists (mappers) painted their sets in Photoshop (in huge, very heavy files ^^) and exported their work as separate .tiff layers into a dedicated folder, following a naming convention specified in advance.

At first, they converted their .tiff files to .map (and .dds) themselves, but this highly repetitive task took time. So I quickly wrote a script that went through all the textures of the current project and checked whether each .map (or .dds) was older than its .tiff. If it was (or if it did not exist yet), the script converted it again. :dentcasse:
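The staleness check can be sketched with file modification times. This is a minimal sketch, assuming the converted file sits next to its .tiff; the actual converter (for instance mental ray's imf_copy called through `subprocess`) is left as a `convert` callback, and all function names here are hypothetical.

```python
import os
import tempfile


def needs_conversion(source, target):
    """True if `target` is missing or older than `source` (by modification time)."""
    if not os.path.exists(target):
        return True
    return os.path.getmtime(target) < os.path.getmtime(source)


def stale_textures(root, out_ext=".map"):
    """Yield (tiff, converted) pairs under `root` whose converted file is out of date."""
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            stem, ext = os.path.splitext(name)
            if ext.lower() not in (".tif", ".tiff"):
                continue
            source = os.path.join(dirpath, name)
            target = os.path.join(dirpath, stem + out_ext)  # assumption: same folder
            if needs_conversion(source, target):
                yield source, target


def update_textures(root, convert, out_ext=".map"):
    """Call convert(source, target) for every stale texture; return the count."""
    count = 0
    for source, target in stale_textures(root, out_ext):
        convert(source, target)
        count += 1
    return count


# Usage with a stand-in converter that only creates an empty target file.
def fake_convert(source, target):
    open(target, "wb").close()


demo_root = tempfile.mkdtemp()
open(os.path.join(demo_root, "ep01_sh001_bg01.tiff"), "wb").close()
first_run = update_textures(demo_root, fake_convert)   # 1: no .map yet
second_run = update_textures(demo_root, fake_convert)  # 0: .map is up to date
print(first_run, second_run)
```

Running the same function with `out_ext=".dds"` would cover the viewport versions; only textures whose source actually changed get reconverted, which is what makes it cheap to run often.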

Characters

I wasn't directly involved in the characters creation.

A 2D artist drew the characters in Photoshop and broke them down into flat pieces, and a character TD, temporarily joined by an animator, set up an automated rigging system to generate animatable characters.

Character maps were converted the same way as the sets.

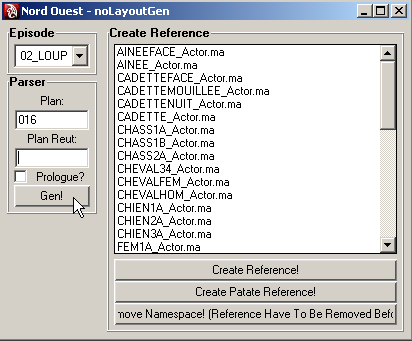

My part in the pipeline started at the layout stage, with a tool to list the characters and import them into the Maya scenes depending on the episode we were working on.

This took a lot of communication to know who to list, and in which cases a particular character should not be listed.
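The listing side of such a tool can be sketched as a small parser for a per-episode casting sheet. The sheet format, the character names and the function names are all hypothetical; the Maya import itself is left out.

```python
# Hypothetical casting sheet, one line per episode: "ep<NN>: name, name, ..."
CASTING_SHEET = """
# episode: characters to import at layout
ep01: hero, heroine, sorcerer
ep02: hero, dragon
"""


def parse_casting(text):
    """Map each episode to the list of characters to import for it."""
    casting = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        episode, _sep, names = line.partition(":")
        casting[episode.strip()] = [n.strip() for n in names.split(",") if n.strip()]
    return casting


def characters_to_import(casting, episode):
    """Characters listed for `episode`; an unknown episode gives an empty list."""
    return casting.get(episode, [])


casting = parse_casting(CASTING_SHEET)
print(characters_to_import(casting, "ep02"))  # ['hero', 'dragon']
```

Keeping the list in a plain text file means the "who to list" decisions from those conversations live in one place that non-technical people can read and edit.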

A note about the .dds format

Michel Ocelot approved the animation from playblasts, so the playblasts had to look as close as possible to the final render.

For this, the textures used in the scene had to be the ones used for rendering, apart from a resize.

So I wrote a CgFX shader that I fed with the .dds versions of the textures to reduce their footprint in GPU memory.

This technique worked very well on sets, but a bug I was never able to fix made some textures disappear while the characters' controllers were being moved.

I had to hack together a kind of surface shader for the viewport, but we lost the advantage of .dds textures, because Maya seems to decompress the texture and put all of it in GPU memory. :pasClasse:

It's a shame, because the character textures were actually the ones taking the most memory. So I had to make "low" versions of the .dds textures (which were already low resolution) and a tool to switch between versions (some shots had a lot of characters)...
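At its core, such a switch is a path rewrite. This is a minimal sketch, assuming a hypothetical `_low` suffix next to the normal file; in Maya the paths would be read from and written back to the texture nodes, which is left out here.

```python
import os

LOW_SUFFIX = "_low"  # hypothetical suffix for the "low" .dds versions


def to_low(path):
    """'tex/hand_L.dds' -> 'tex/hand_L_low.dds' (unchanged if already low)."""
    root, ext = os.path.splitext(path)
    if root.endswith(LOW_SUFFIX):
        return path
    return root + LOW_SUFFIX + ext


def to_normal(path):
    """'tex/hand_L_low.dds' -> 'tex/hand_L.dds' (unchanged if already normal)."""
    root, ext = os.path.splitext(path)
    if root.endswith(LOW_SUFFIX):
        return root[: -len(LOW_SUFFIX)] + ext
    return path


def switch_versions(paths, low):
    """Point every texture path at the low or the normal .dds version."""
    convert = to_low if low else to_normal
    return [convert(path) for path in paths]


print(switch_versions(["tex/boy_body.dds", "tex/boy_head_low.dds"], low=True))
# ['tex/boy_body_low.dds', 'tex/boy_head_low.dds']
```

Making both directions idempotent means the switch can be run on a whole scene regardless of which textures were already switched. The sketch does not check that the low file exists on disk.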

Layout

So I made the character importer, but also a "set generator". Each shot in the film is actually a stack of layered maps.

As the file naming convention was clear, it was very simple for a script to find where the different layers of the current shot were.

It was then enough to specify the episode and shot numbers to generate, and click OK.

A set rig was imported and adapted to the shot. Each layer of the set maps was loaded on its own plane, and the camera's image plane displayed the animatic exported from the edit.
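The file-finding part of the set generator can be sketched as follows. The folder layout, the `L<NN>_` layer prefix (with L00 as the farthest layer) and the function names are hypothetical; creating the planes and the set rig in Maya is left out.

```python
import os
import re
import tempfile

# Hypothetical layout: <root>/ep<NN>/sh<NNN>/layers/L<NN>_<name>.<ext>,
# where L00 is the farthest layer (the sky, say) and higher numbers come closer.
LAYER_RE = re.compile(r"^L(\d{2})_(\w+)\.(?:png|tiff?|map)$")


def shot_folder(root, episode, shot):
    """Folder holding the set layers of one shot."""
    return os.path.join(root, "ep%02d" % episode, "sh%03d" % shot, "layers")


def shot_layers(root, episode, shot):
    """Return (index, name, path) for each layer of the shot, farthest first."""
    folder = shot_folder(root, episode, shot)
    layers = []
    for filename in os.listdir(folder):
        match = LAYER_RE.match(filename)
        if match:
            layers.append((int(match.group(1)), match.group(2), os.path.join(folder, filename)))
    return sorted(layers)


# Usage: build a fake shot folder, then list its layers in stacking order.
demo_root = tempfile.mkdtemp()
demo_folder = shot_folder(demo_root, 2, 15)
os.makedirs(demo_folder)
for name in ("L01_trees.png", "L00_sky.png", "L02_house.png", "notes.txt"):
    open(os.path.join(demo_folder, name), "w").close()
print([name for _index, name, _path in shot_layers(demo_root, 2, 15)])
# ['sky', 'trees', 'house']
```

With the layers already sorted back to front, the Maya side only has to create one plane per entry in that order; files that do not follow the convention are simply ignored.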

All that remained was "just" to place the characters.

I also wrote a "transfer to shot" script so that layout artists didn't have to reposition every character when they were in the same position two shots later...
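One way to picture such a transfer is to store each character's placement in a small file and read back only the characters present in the target shot. This is a minimal sketch with hypothetical function names and a JSON storage format chosen for illustration; reading and setting the transforms in Maya (e.g. with `maya.cmds.xform`) is left out.

```python
import json
import os
import tempfile


def save_placements(placements, path):
    """Write {character: {"translate": [...], "rotate": [...]}} to a JSON file."""
    with open(path, "w") as handle:
        json.dump(placements, handle, indent=2, sort_keys=True)


def transfer_placements(path, characters):
    """Saved placements for the characters present in the target shot."""
    with open(path) as handle:
        saved = json.load(handle)
    return {name: saved[name] for name in characters if name in saved}


# Usage: placements saved from one shot, reused for a later shot
# in which only some of the same characters appear.
demo_path = os.path.join(tempfile.mkdtemp(), "ep01_sh010_placements.json")
save_placements(
    {"boy": {"translate": [1.0, 0.0, -3.5], "rotate": [0.0, 15.0, 0.0]},
     "girl": {"translate": [2.0, 0.0, -3.0], "rotate": [0.0, 0.0, 0.0]}},
    demo_path,
)
print(transfer_placements(demo_path, ["boy", "dragon"]))
# {'boy': {'rotate': [0.0, 15.0, 0.0], 'translate': [1.0, 0.0, -3.5]}}
```

Characters missing from the saved shot are simply skipped, so the layout artist only has to place the newcomers by hand.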

Animation

There was nothing "pipelined" about publishing layout scenes. Animators just copied the layout scene and started animating their shot from it.

I wrote a character panel that was mainly used for hands. Hands were simply textures on faces, shown or hidden during the animation.

Hand textures and the numeric "show/hide" attributes followed a naming convention. So each time the mappers created a new hand, I just had to add one line to my panel's code to make everything appear in the panel.
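Here is why one line was enough: every name can be derived from the hand's name. This is a minimal sketch; the exact naming pattern is hypothetical, it assumes only one hand shape per side is visible at a time, and the Maya UI and attribute calls are left out.

```python
# Hypothetical convention: the hand "fist" on the left side uses the texture
# "hand_L_fist.dds" and the numeric visibility attribute "hand_L_fist_vis".
HANDS = [
    "open",
    "fist",
    "point",  # a new hand from the mappers = one new line here
]


def panel_entries(side):
    """One panel entry per hand, with every name derived from the convention."""
    return [
        {
            "label": "%s %s" % (side, hand),
            "texture": "hand_%s_%s.dds" % (side, hand),
            "attribute": "hand_%s_%s_vis" % (side, hand),
        }
        for hand in HANDS
    ]


def visibility_values(side, shown):
    """Attribute values that show the `shown` hand (1) and hide all the others (0)."""
    return {"hand_%s_%s_vis" % (side, hand): int(hand == shown) for hand in HANDS}


print(visibility_values("L", "fist"))
# {'hand_L_open_vis': 0, 'hand_L_fist_vis': 1, 'hand_L_point_vis': 0}
```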

However, automating the render required the scenes to be published in specific folders where the script could find them.

So I made a publishing tool that saved the current Maya scene along with a small empty file (acting as a "marker" to signal that this scene had just been republished).
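The marker idea can be sketched with plain file operations. In Maya the save itself would go through `maya.cmds.file`; here a file copy stands in for it, and the `.published` marker suffix and function name are hypothetical.

```python
import os
import shutil
import tempfile

MARKER_EXT = ".published"  # hypothetical marker suffix


def publish(scene_path, publish_dir):
    """Copy the scene into publish_dir and drop an empty marker file next to it."""
    os.makedirs(publish_dir, exist_ok=True)
    target = os.path.join(publish_dir, os.path.basename(scene_path))
    shutil.copy2(scene_path, target)
    # The marker carries no data: its presence alone means "just (re)published".
    open(target + MARKER_EXT, "w").close()
    return target


# Usage with a dummy scene file standing in for the Maya scene.
work_dir = tempfile.mkdtemp()
scene = os.path.join(work_dir, "ep01_sh010.mb")
with open(scene, "w") as handle:
    handle.write("dummy scene")
published = publish(scene, os.path.join(work_dir, "publish"))
print(os.path.exists(published + MARKER_EXT))  # True
```

An empty file is the simplest possible signal: the render script only has to test whether it exists, and deleting it marks the scene as handled.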

Rendering

No human intervention was needed for this part: I had scripted everything.

I just had to launch a script that collected all the animation scenes with a "marker", opened them and applied another script that did many things, for example:

- Change texture paths (replacing ".dds" with ".map", for example).

- Change texture options (to use elliptical filtering).

- Set optimal render parameters.

- Look up, in a text file, whether the current shot had motion blur and, if so, enable it.

- Apply some modifications (offset the hand animation by 0.5 frame when rendering with motion blur to avoid a "popping" effect during image rotation, hide some visible controllers, etc.).

- Apply some hacks depending on the episode/shot (a different blur on the "Garçon Tamtam" hands, for example).

- Generate a job file containing the command lines to run (we used Smedge), plus a .bat for the special cases we needed to render locally.

- Remove the "marker" file.

- Save the "ready to render" scene in the right folder.
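The file-level side of these steps can be sketched without Maya. This is a minimal sketch under several assumptions: the `.published` marker suffix, the `ep01_sh010 1` motion blur file format and all function names are hypothetical, the Maya-side fixes are only marked by a comment, and the Smedge job format is not reproduced. The `Render -r mr -rd` flags are meant as Maya's command-line renderer selecting mental ray and an output directory.

```python
import os
import tempfile

MARKER_EXT = ".published"  # hypothetical marker suffix


def marked_scenes(publish_dir):
    """Published scenes that still have a marker next to them."""
    return sorted(
        os.path.join(publish_dir, name[: -len(MARKER_EXT)])
        for name in os.listdir(publish_dir)
        if name.endswith(MARKER_EXT)
    )


def load_motion_blur(text):
    """Parse lines like 'ep01_sh010 1' into {'ep01_sh010': True}."""
    table = {}
    for line in text.splitlines():
        fields = line.split()
        if len(fields) == 2:
            table[fields[0]] = fields[1] == "1"
    return table


def render_texture_path(path):
    """Swap a viewport .dds texture for its render .map version (applied inside Maya)."""
    root, ext = os.path.splitext(path)
    return root + ".map" if ext.lower() == ".dds" else path


def render_command(scene, output_dir):
    """Command line for Maya's batch renderer with mental ray."""
    return 'Render -r mr -rd "%s" "%s"' % (output_dir, scene)


def prepare(publish_dir, ready_dir, output_dir, blur_text, bat_path):
    """Plan the render of every marked scene, write a .bat, remove the markers."""
    blur = load_motion_blur(blur_text)
    plan = []
    for scene in marked_scenes(publish_dir):
        name = os.path.basename(scene)
        shot = os.path.splitext(name)[0]
        motion_blur = blur.get(shot, False)
        # In the real tool, Maya opened `scene` here, applied the fixes (texture
        # paths, filtering, render settings, hand offset...) and saved it in ready_dir.
        plan.append({
            "shot": shot,
            "motion_blur": motion_blur,
            "hand_offset": 0.5 if motion_blur else 0.0,
            "command": render_command(os.path.join(ready_dir, name), output_dir),
        })
        os.remove(scene + MARKER_EXT)  # no longer "freshly published"
    with open(bat_path, "w") as bat:
        bat.write("".join(entry["command"] + "\n" for entry in plan))
    return plan


# Usage: one marked scene, one unmarked scene.
demo = tempfile.mkdtemp()
for name in ("ep01_sh010.mb", "ep01_sh010.mb" + MARKER_EXT, "ep01_sh020.mb"):
    open(os.path.join(demo, name), "w").close()
plan = prepare(demo, "ready", "images", "ep01_sh010 1\nep01_sh020 0\n",
               os.path.join(demo, "render_local.bat"))
print(plan[0]["shot"], plan[0]["hand_offset"])  # ep01_sh010 0.5
```

Removing the marker only after a scene has been handled means a scene republished later gets a new marker and is picked up again on the next run.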

At lunchtime, artists started a Smedge client on their workstation so renders could be computed.

Mental ray was the main render engine and it did the job well (it was my favorite render engine...). Once the .map files were created and the elliptical filter set, everything was fine!

Compositing

Compositing was done in After Effects and was split into two parts:

- The first, covering all the "slightly special" things (smoke effects, morphs, some particular animations, etc.), was done by a 2D animator.

- The second, covering most of the shots (80%), was mainly quick improvements, global effects (depth of field), etc.

I wrote an After Effects script (my first one) that created a comp for the shot, imported every layer, stacked them in the right order and created an export set.

This script also let us get "raw" renders quickly: we ran it for a list of shots, clicked the "Render" button and everything started computing. All that remained was to check the edit (Final Cut), which was updated automatically.

A second script (actually used by the first one) generated export sets from the selected comps in After Effects. This simplified the compositors' lives: they never lost time exporting their work correctly.

All the rest

Of course, there were many small tasks to do during the project:

- Episode backup.

- Disk space management on the servers.

- Technical storyboard reading.

- etc...

From a series to a stereoscopic 3D feature film

Yep! Because that is what made this project interesting. The original idea was a full HD series for a French TV channel (Canal+), and it ended up as a stereoscopic 3D feature film. That is not something you work on every day. :redface:

I remember that the idea of a feature film came up after a screening for Canal+, without really being official (there was no talk of stereo 3D at that time). In practice, this changed very little for us.

But when we started talking about stereo 3D, things changed a little. Mac Guff handled it. We gave them a few shots representative enough of the movie and they did "real" stereo (not like Clash of the Titans).

I insist on the word "real" because Mac Guff had all the layers (and our After Effects files) and was able to work on the stereo without having to artificially recreate the depth.

There were 5 or 6 of us at the first screening and I must say I was really impressed by the result. The depth and the blacks gave a real theatrical effect. The stereo team had done an excellent job and I think everyone was won over. (Avatar can go pack its bags, it just took a spinning low kick there! :baffed: )

Once stereo 3D was officially approved, the number of layers exploded. At times the After Effects projects brought our little network to its knees. In the end, computing images locally was a simple, cheap solution that partly solved the problem.

The production went very well and painlessly, which is rare enough to be worth mentioning.

What I would have liked to do better

Of course, in hindsight, I think some points deserved a little more attention.

- I wish I had solved the texture problem (CgFX + .dds) that made textures "pop" in the viewport when animators moved their controllers. The workaround was not optimal and increased Maya's load when there were a lot of characters. Segfaults were far too frequent...

- I chose to use .png at every step of the project (except Photoshop exports, because .tiff was the only file format that kept alpha channels through the .map conversion) because this format is very "simple" (RGBA and that's all!) and I wanted to avoid .tiff, which was much more "opaque" (many possible kinds of compression, varying numbers of channels, can't be opened everywhere, etc.). I now see this as a "rookie" mistake, because .png files are extremely slow to open. A .tiff, if it is handled consistently at every step of the pipeline, is much faster.

- Probably other things...

Conclusion

As you may have gathered, this was a great experience, both professionally and personally. Working with Michel Ocelot is very pleasant.

It was also my first technical supervision on a feature film.

The movie was nominated 8 times at the Berlin International Film Festival, which is quite an achievement when I remember how few people actually worked on this project (compared to other animated feature productions).

The press reviews are very good, and I hope this somewhat technical post will make you want to see this film, made with love. :sourit:

My favorite tales? :sourit:

- The Werewolf: Because the work done on the leaves makes great stereo.

- The Chosen One of the Golden City: Because in the theater, with the music, your adult heart will inevitably shed a few tears of happiness.

See you!

Dorian